The Media Industry Needs Better AI Benchmarks

Every day, technical leaders across media are choosing which AI models to trust with their video workflows. But the benchmarks guiding those decisions don't measure anything close to what actually matters in production. The result: misallocated budgets, brittle deployments, and a widening gap between what models promise and what they deliver. To understand why, it helps to look at how we got here.

AI is beginning to understand video

For most of the industry’s history, video has been “understood” only through human effort. In the tape era, that meant analog logging and annotation. In the digital era, we massively scaled video storage and distribution. But understanding was still gated by human perception and reasoning i.e. humans (e.g., producers, editors) doing the work of tagging, describing, and organizing so content could be found and used by systems.

This has now fundamentally changed.

Multimodal foundation models can now ingest video directly and produce valuable outputs e.g., what it’s about, how it feels, why it works, where the key moments are. What started as simply improving tagging in the 2010s has now evolved to systems that makes video computable i.e. systems (e.g., Qwen, Gemini, GPT-5) doing the work of tagging, describing, and organizing so content could be found and used by humans.

Benchmarks have been at the heart of this progress, enabling us to track improvements and set a direction for future innovation.

For example, ImageNet (released in 2009) and the ILSVRC leaderboard (running annually starting on 2010) didn’t just measure progress in computer vision. They effectively defined what “progress” meant for over a decade.

This brings us to an old saying.

For better or worse, benchmarks define a field- Anonymous

The question then becomes: where are we today in how we benchmark video understanding?

The benchmark gap: today’s video benchmarks don’t reflect media reality

First things first - a benchmark is simply a measurement created by the field that defines: this is what “good” looks like. Fundamentally, it boils down to two core components:

- Data (what kinds of videos?)

- Tasks (what are we asking the model to do?)

Get it right, and it creates a shared target that accelerates real progress. Get either wrong, and the leaderboard becomes a mirror that flatters but doesn’t lead to real outcomes.

Unfortunately, today both data and tasks in most video benchmarks are misaligned with real-world needs of the media industry.

From a data perspective:

- Video benchmarks focus on short, trimmed clips that are often 10-30 seconds long.

- Meanwhile, the media industry uses short-form (ads, promos, social) and long-form (episodes, sports, news, films) videos with runtimes ranging from minutes to hours that have complex elements, such as pacing and narrative.

From a task perspective:

- Video benchmarks focus on short, localized tasks centered on objects/actions.

- Meanwhile, the media industry focuses on higher-order semantics over longer content (e.g., meaningful moments, promo selection, narrative summaries, genre/mood/themes, intent/message) with long-range dependencies.

Why this benchmark gap is now an industry risk

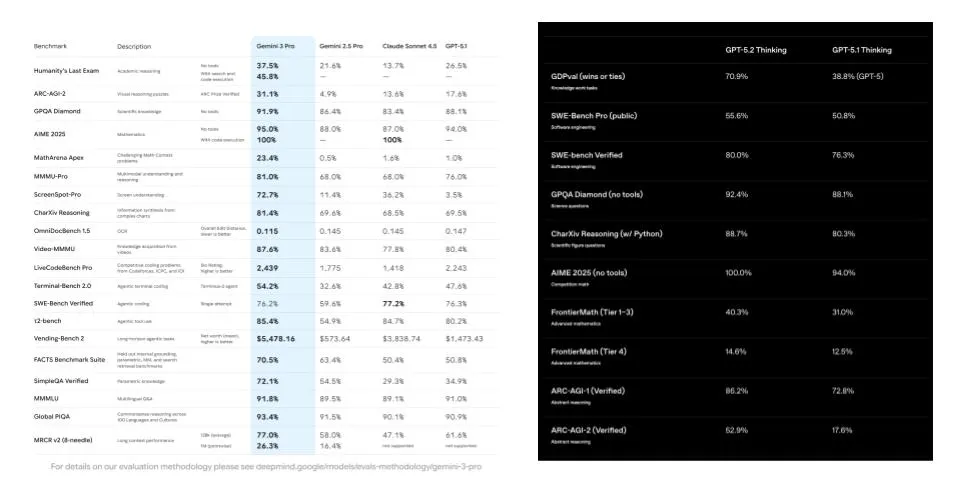

If you’re a technical leader in media, the benchmark gap isn’t academic - it’s a decision-making hazard. For example, if I use Video-MMMU to choose between Gemini 2.5 Pro (83.6%) and GPT-5 (84.6%), GPT-5 appears to be the winner. But if this benchmark’s task doesn’t match what I need from my production system, that “win” is misleading. This why this benchmark gap is directly distorting strategy and decision making.

For short-term risks, over the past 5 years of working with technical leaders building multimodal systems, we’ve learned just how difficult it can be to get started due to the lack of clear benchmarking data to make (relatively) simple decisions, such as:

- “Which model works best for our video and workflows?”

- “What’s the real cost of processing our video at scale for our workloads?”

- “How long does it take to process my archive of video?”

For long-term risks, we’ve observed the industry adopt two common misconceptions due to the lack of industry specific benchmarks to ground the conversation:

- Misconception #1: “Models are good enough.” Again, most benchmarks for these models are not representative of the real data and tasks in practice so following “progress” on existing benchmarks is misleading. From experience, we’ve worked with leaders who often end up discovering this benchmark gap the hard way: through costly human review, brittle heuristics, and downstream consistency issues when these models are actually deployed in production.

- Misconception #2: “Models will get better over time.” Models will improve on general tasks, but benchmarks drive this progress and there is a gap on benchmarking what media cares about most. If media-focused data and tasks aren’t on the scoreboard, they won’t be improved at the speed or the direction the industry expects.

This is why we the media industry needs a shared target that lets industry, academia, and model providers align on what matters, so progress becomes predictable, comparable, and deployable.

MediaPerf: an industry-driven benchmark for AI video understanding in media

Today, we are excited to introduce MediaPerf: a benchmark for video understanding of multimodal foundation models focused on the real data + tasks technical leaders and practitioners within the media industry are building and deploying in production.

We’re building MediaPerf from a position of hard-earned perspective: years spent building multimodal systems for the enterprise since 2021, and experience learning what it takes to make benchmarks valuable, scalable, and ecosystem-shaping (some of our previous efforts in this include MLPerf, DataPerf, and the Dollar Street dataset).

With this in mind, MediaPerf is built around three core commitments:

- Representative data: MediaPerf is grounded in videos that are representative of those used in real media workloads. We are launching with short-form video today, with a roadmap to include longer-form video in the future.

- Representative tasks: MediaPerf measures performance on tasks that are powering most use cases in the media industry today. We are launching with tagging and summarization, with a roadmap to include search/retrieval and workflow-oriented tasks in the future.

- Representative measurement: MediaPerf measures metrics that matter for deploying real systems. Concretely, in addition to task performance, this benchmark also measures costs and latency/throughput on these tasks.

The value of MediaPerf

MediaPerf is designed to be useful on day one. Not just as a leaderboard that helps inform decision making, but as an accelerator for technical teams building production systems with these multimodal models.

- No more guessing on which model to pick: You’ll be able to quantitatively compare models on real tasks using a standardized evaluation and reporting that makes quality/cost/latency tradeoffs explicit.

- An open-source, production-grade codebase to start from: The open-source codebase is designed to represent meaningful engineering work to help you and your team get started (roughly what 2–3 engineers could build in a quarter). It includes a modular generation and evaluation framework for most popular multimodal foundation models today so you can fork, adapt, and deploy.

- A fully labeled dataset you can use commercially to validate and fine-tune: Evaluation data is shared under CC-BY permitting commercial use. This means that you can:

- Verify the reported results

- Run your own custom evaluations

- Use the dataset to fine-tune or adapt models for media-specific tasks

Get involved

Benchmarks don't just measure a field - they define it. MediaPerf will only reflect what media actually needs with help and support from the community. Here's how to get started

- Spread the word: If this resonates, share this blog and our website (mediaperf.org) with technical leaders, research teams, and model partners.

- Stay up to date: Sign up for our email list at mediaperf.org to get the latest results, new benchmarks, and community updates.

- Contribute directly: If you want a hand in shaping what this benchmark becomes, email us at info@mediaperf.org.Ways to contribute today:

- Contribute to our open-source GitHub repo (see our issues and known limitations pages)

- Contribute data and/or licenses for benchmarking purposes (videos, labels, summaries, etc.)

- Join ongoing discussions in our working group and apply to join our benchmark governance board: info@mediaperf.org

For general feedback or questions, reach out to info@mediaperf.org.

.webp)